Hey, You Should Probably Check Your Chatbot’s Privacy Settings

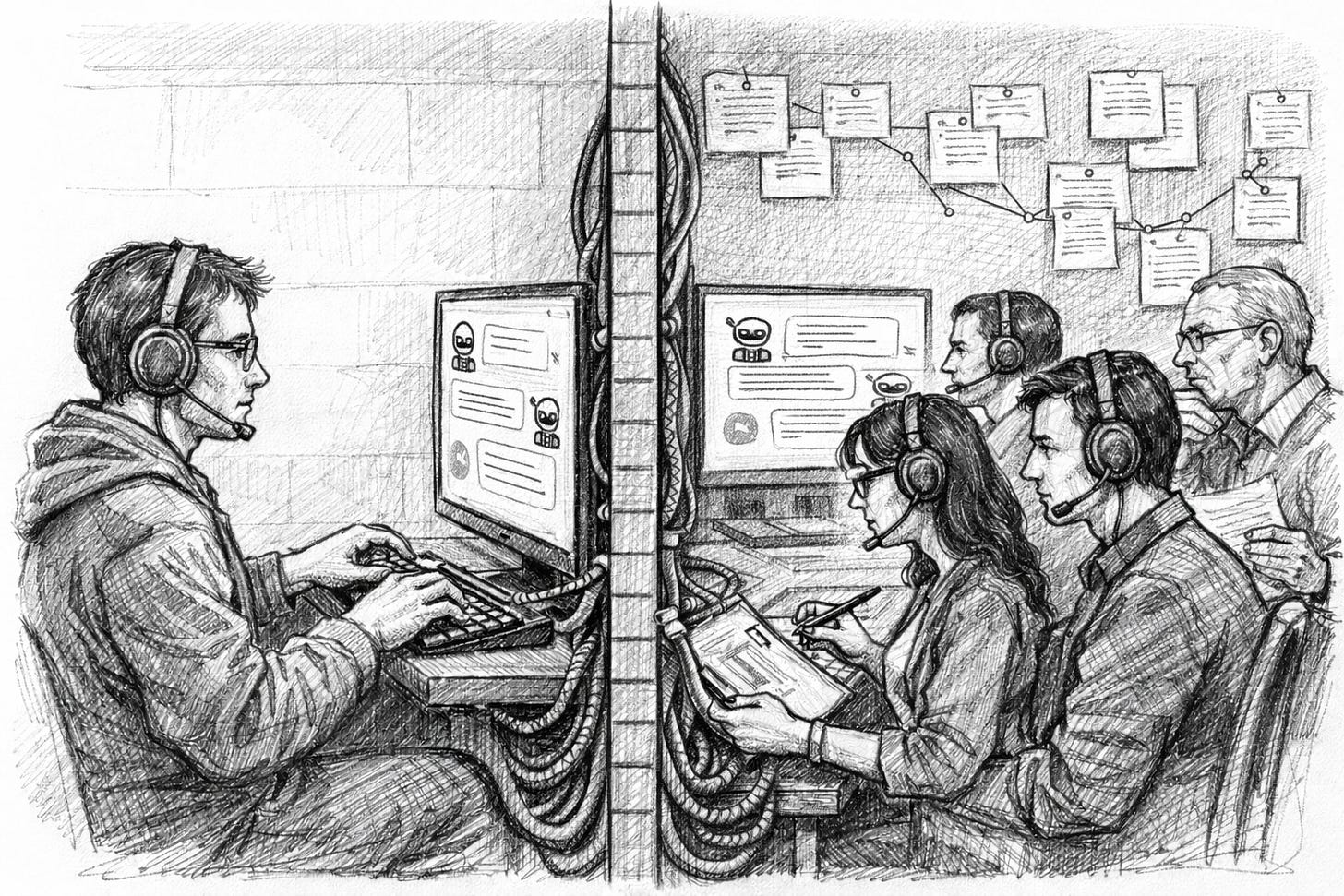

Did you know the leading AI labs opt your conversations in for training by default? Time to check the settings.

This week, I was surprised to learn that the world’s leading AI labs have granted themselves free rein to train on our conversations. I’ve since fixed the problem for my account, and you might want to as well.

Amazon, Anthropic, Google, OpenAI, Meta, and Microsoft have all built default settings that allow them to train on anything you input into the chatbot window, from medical records to open-hearted confessions. Unless you toggle the setting off, you’ve granted them the right to access all your AI interactions.

If you’re mostly using the bots for rudimentary work, that’s probably fine. But if you’re inputting financial, medical, or other personal information (I’m guilty of all the above), then it’s less advisable.

“You’re opted-in by default,” Dr. Jennifer King, privacy and data policy fellow at Stanford’s Institute for Human-Centered Artificial Intelligence, told me. “They are collecting all of your conversations.”

Dr. King is the lead author of a viral paper that examined these companies’ data collection processes last year. The paper, called User Privacy and Large Language Models, highlights a privacy issue that will only become more urgent as people trust increasingly capable chatbots with more sensitive documents.

And given that these AI research outfits have exhausted almost all available data on the internet (and elsewhere), new data coming in via our conversations with the chatbots is particularly precious. “It’s very valuable,” said King. “The research today shows that if you keep retraining on AI-generated content, you end up with model collapse.”

The AI model builders do install guardrails to ensure personal information isn’t spit out by chatbots. And many strip identifying information out of training sets. But with the amount of data involved, it’s a risky process to trust.

How to Opt Out

The chatbots’ settings are somewhat hard to find and decipher. So in the spirit of full disclosure, I’ll lay them out here, at least for ChatGPT, Claude, and Gemini:

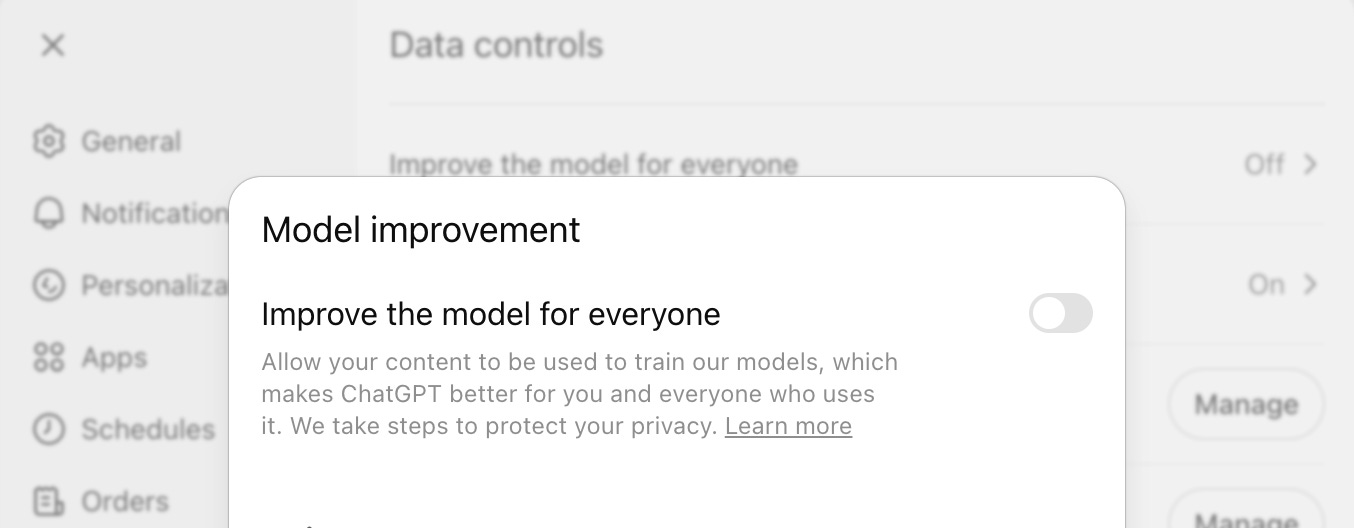

ChatGPT

Within the Data Controls section in ChatGPT’s settings, the “Improve the model for everyone” setting opts your conversations in for training. Turn it off and the company no longer has your permission to train on your chats.

Claude

Inside Claude’s Privacy section, the “Help Improve Claude” setting can be toggled off to remove your conversations from training.

Gemini

And for Gemini, head to the Activity section within settings and turn it off to prevent training.

The squishy opt-out language is “obscuring what they’re really doing by appealing to your social good, essentially,” Dr. King said. “It frames it as a trade-off, that you’re going to make this thing worse if you don’t comply.”

Now, at least you have the information. Go do with it what you will.

What Else I’m Reading, Etc.

Anthropic and the Pentagon are back in talks [CNBC]

OpenAI’s new GPT 5.4 model can natively use computers [TechCrunch]

Yes, we can tell when you’re writing with AI [WSJ]

Cluely CEO admits he lied about $7M revenue figure [TechCrunch]

The McDonald’s CEO couldn’t bring himself to scarf his burger [New York Times]

Survivors of the Tahoe backcountry avalanche share what happened [New York Times]

This Week On Big Technology Podcast: Pentagon Insider: What’s Next For Anthropic and The Department of War — With Michael Horowitz

Michael Horowitz is the former deputy assistant secretary of defense for force development and emerging capabilities at the Department of Defense, and currently a professor at the University of Pennsylvania. Horowitz joins Big Technology to discuss the Anthropic–Pentagon rupture and what it signals about how the U.S. government wants to use frontier AI. Tune in to hear his inside view on how models like Claude actually get deployed in defense workflows, why a contract fight over “mass surveillance” language escalated, and what the trust breakdown says about the future of AI partnerships with the state. We also cover autonomous weapon systems vs. “fully autonomous weapons,” what today’s AI can and can’t do on the battlefield, and how AI is likely to reshape warfare over time. Hit play for a clear-eyed look at where Silicon Valley and the national security establishment collide—and what happens next.

You can listen on Apple Podcasts, Spotify, or your podcast app of choice.

Thanks again for reading. Please share Big Technology if you like it!

And hit that Like button. It won’t collect your deepest, darkest thoughts, I promise.

My book Always Day One digs into the tech giants’ inner workings, focusing on automation and culture. I’d be thrilled if you’d give it a read. You can find it here.
