Most AI Training Is Moving To Reinforcement Learning, Scale AI Says

Now that the models are smart enough, reinforcement learning that helps them complete tasks is the focus.

Scale AI, the AI data company that helps DeepMind, Meta, and Apple train their models, is now mostly working on reinforcement learning, a method that trains AI models to complete tasks, as opposed to traditional LLM training, which makes them more book-smart.

The shift is a stunning evolution in the direction of large language model development, indicating that traditional training has hit some limits while, at the same time, reinforcement learning — along with the agentic use cases it enables — is showing real promise.

“More than half of the training work we do involves reinforcement learning,” Chetan Rane, Scale’s head of product for agents and reinforcement learning environments, told me. “It was probably less than a quarter even six months ago.”

Traditional large language model training (also known as self-supervised learning) focuses on predicting patterns, while reinforcement learning gives models goals and lets them figure out how to achieve them. Current AI models are smart enough at the base level to attempt these tasks, and reinforcement learning is the method that teaches them to complete those tasks proficiently. This task-based training is meant to transform AI from giving text- or voice-based responses to doing things online.
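To make "predicting patterns" concrete, here is a toy sketch of the self-supervised idea: the training signal comes from the text itself, with no goal or reward involved. It uses a simple bigram counter as a stand-in for a language model, which is an illustrative assumption and not how any lab's models work. The function names and the sample sentence are hypothetical.

```python
import math
from collections import Counter, defaultdict

def fit_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_prob(counts, prev, nxt):
    """Estimated probability that `nxt` follows `prev`."""
    total = sum(counts[prev].values())
    return counts[prev][nxt] / total if total else 0.0

def predict_next(counts, prev):
    """Most frequent follower of `prev` in the training text."""
    return counts[prev].most_common(1)[0][0]

def avg_next_token_loss(counts, tokens):
    """Average negative log-likelihood of each actual next token."""
    losses = [-math.log(next_token_prob(counts, p, n))
              for p, n in zip(tokens, tokens[1:])]
    return sum(losses) / len(losses)

# Hypothetical sample text; the "labels" are just the next words.
tokens = "the cat sat on the mat the cat ran".split()
model = fit_bigram(tokens)
print(predict_next(model, "the"))
print(avg_next_token_loss(model, tokens))
```

Nothing here tells the model what to accomplish. It only gets better at guessing what comes next, which is the contrast with reinforcement learning that Rane is drawing.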

For each task a model tackles, trainers must build highly specialized reinforcement learning ‘environments’ that mimic the steps the model will take when it’s let loose on the web. Teaching an AI model to navigate banking websites, for instance, involves building out user flows that account for almost every conceivable step and then setting the AIs loose within them. When the AIs figure out how to achieve their goals, the data is fed back into the models’ DNA, or weights, and the models become more capable of accomplishing them.
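For a rough sense of what an environment looks like in code, here is a minimal sketch of a made-up bank-transfer flow. Everything in it is an assumption for illustration: the page names, the actions, and the learner, which is a small tabular Q-learning loop standing in for the far larger weight updates applied to real models. It is not Scale's tooling or any lab's actual method.

```python
import random

# Hypothetical user flow: each page has exactly one action that advances it.
FLOW = ["login_page", "dashboard", "transfer_form", "confirm_page", "done"]
ACTIONS = ["log_in", "open_transfer", "fill_form", "confirm"]
CORRECT = {"login_page": "log_in", "dashboard": "open_transfer",
           "transfer_form": "fill_form", "confirm_page": "confirm"}

class BankTransferEnv:
    """Toy environment: reward is given only when the transfer completes."""
    def __init__(self, max_steps=20):
        self.max_steps = max_steps

    def reset(self):
        self.pos, self.steps = 0, 0
        return FLOW[self.pos]

    def step(self, action):
        self.steps += 1
        if CORRECT[FLOW[self.pos]] == action:
            self.pos += 1  # right click: move to the next page
        state = FLOW[self.pos]
        done = state == "done" or self.steps >= self.max_steps
        reward = 1.0 if state == "done" else 0.0
        return state, reward, done

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning: the table plays the role of the 'weights'."""
    rng = random.Random(seed)
    env = BankTransferEnv()
    q = {(s, a): 0.0 for s in FLOW for a in ACTIONS}
    for _ in range(episodes):
        state, done = env.reset(), False
        while not done:
            if rng.random() < epsilon:  # occasionally try a random action
                action = rng.choice(ACTIONS)
            else:                       # otherwise use what has been learned
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = env.step(action)
            best_next = max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (
                reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q

def greedy_rollout(q):
    """Run one episode using only the learned values, no exploration."""
    env = BankTransferEnv()
    state, done, taken = env.reset(), False, []
    while not done:
        action = max(ACTIONS, key=lambda a: q[(state, a)])
        taken.append(action)
        state, _, done = env.step(action)
    return taken, state

q_table = train()
actions_taken, final_state = greedy_rollout(q_table)
print(actions_taken, final_state)
```

The agent is never told the right sequence of clicks. It gets a reward only when the transfer goes through, and the successful attempts are what shape the learned values. A real environment would have to cover far more pages, branches, and failure modes, which is why building them is so labor-intensive.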

There are some real limits on reinforcement learning, though. Models trained this way tend not to generalize well, meaning they struggle with scenarios they haven’t seen before, even if their absolute level of intelligence goes up. “There’s proof that some amount of generalization happens,” Rane said. “But there are fundamental limitations.”

As AI training with reinforcement learning moves forward, expect the divergence between AI models to grow as the AI labs decide which types of activities to emphasize. One AI model company may make a model that is really good at accounting tasks, for instance, while another might be good at booking concert tickets. This is potentially one place where the AI race will sort itself out, as certain models become better equipped to handle certain tasks.

“We’re seeing a lot more divergence today than, frankly, we did a year ago,” Rane said, “when everyone was just trying to build basic intelligence and keep up with one another. Now it’s clear that some labs are going more after the coding space, others are going more after the enterprise, others may be going after consumer.”

As these models roll out, though, don’t expect the fundamental economics of AI infrastructure to change. Rane expects them to use much more compute, explaining that each attempt at a task requires a large number of calculations. “RL requires much more compute than previous training paradigms,” he said.

How Texas Instruments Builds Foundational Semiconductors and What They Enable (sponsor)

Jeff Morroni is the CTO for power at Texas Instruments. Morroni joins us live on location as Texas Instruments opens its brand-new semiconductor fab in Sherman, Texas. In this conversation, Morroni goes into depth about the different kinds of semiconductors that exist and what job they do, what the semiconductors built in Sherman are used for, and how innovation is at the core of TI’s technology development and manufacturing capabilities. Tune in for a fascinating, on-the-ground look at the reality of building the pivotal component in electronics today, and how TI is working to expand capacity now and for the future.

What Else I’m Reading, Etc.

OpenAI raised $110 billion from Nvidia, Amazon, and Softbank [CNBC]

Anthropic says no to the Pentagon [WSJ]

Jack Dorsey cuts nearly half of Block’s staff, cites AI, predicts others will follow [SFGate]

The Citrini note that crashed markets [Citrini Research]

Citadel’s takedown of the Citrini note [Citadel Securities]

AI’s Dr. Manhattan Syndrome [Person Familiar]

World Economic Forum CEO out after Epstein ties revealed [New York Times]

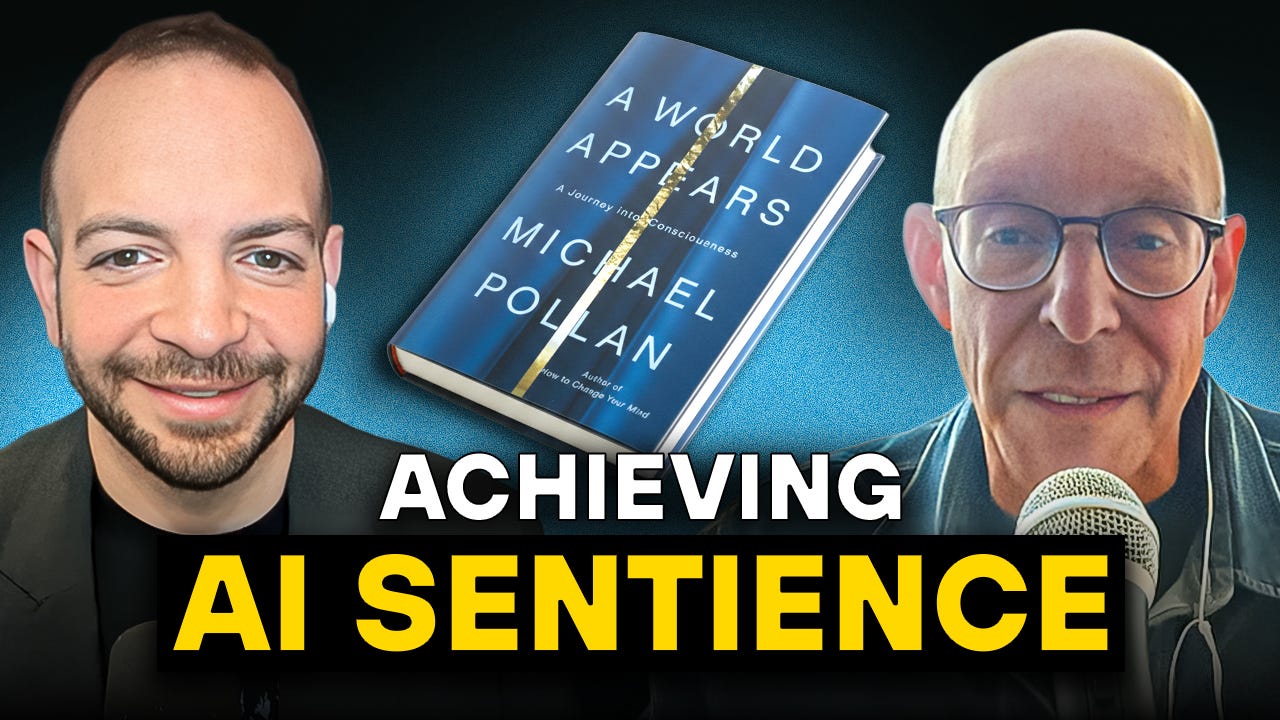

This Week On Big Technology Podcast: Can AI Become Conscious? — With Michael Pollan

Michael Pollan is the author of A World Appears: A Journey into Consciousness. Pollan joins Big Technology Podcast to discuss whether AI can ever become conscious and what that question reveals about the nature of mind. Tune in to hear a nuanced debate about whether consciousness is computable, where today’s LLMs fall short, and how researchers might actually test machine consciousness in the future. We also cover materialism vs. spirituality, the “hard problem” of consciousness, psychedelic experiences, and the emerging science of plant sentience. Hit play for a thoughtful, surprising conversation that brings the AI consciousness debate back down to earth while opening up some of its strangest possibilities.

You can listen on Apple Podcasts, Spotify, or your podcast app of choice.

Thanks again for reading. Please share Big Technology if you like it!

And hit that Like Button to help reinforce Big Technology’s ability to keep sending these newsletters :)

My book Always Day One digs into the tech giants’ inner workings, focusing on automation and culture. I’d be thrilled if you’d give it a read. You can find it here.

Join Big Technology’s Private Discord Server!

Where we’ll talk about this story, the latest in AI, the week’s podcast, and plenty more. You can sign up via the link below: